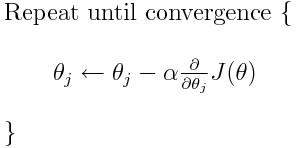

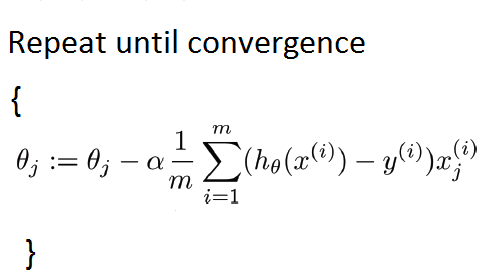

MathType - Gradient descent is an iterative optimization algorithm for finding local minima of multivariate functions. At each step, the algorithm moves in the direction opposite to the gradient, consequently reducing the value of the function.

By a mysterious writer

Last updated 31 March 2025
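The update described above can be sketched in a few lines of Python: starting from a point x, repeat x ← x − η ∇f(x) until the gradient is close to zero. This is a minimal sketch under stated assumptions, not a reference implementation. The names `gradient_descent`, `learning_rate` (a fixed step size η), `tol`, `max_iter`, and the example function `f` with its gradient `grad_f` are all hypothetical choices made for illustration. Practical implementations often replace the fixed step with a line search or an adaptive schedule.

```python
import math
from typing import Callable, List

Vector = List[float]


def gradient_descent(
    grad: Callable[[Vector], Vector],
    x0: Vector,
    learning_rate: float = 0.1,  # hypothetical fixed step size (eta)
    tol: float = 1e-8,           # hypothetical stopping tolerance on the gradient norm
    max_iter: int = 10_000,
) -> Vector:
    """Repeatedly step against the gradient until it is (nearly) zero."""
    x = list(x0)
    for _ in range(max_iter):
        g = grad(x)
        # A near-zero gradient means x is (close to) a stationary point.
        if math.sqrt(sum(gi * gi for gi in g)) < tol:
            break
        # Move in the direction opposite to the gradient.
        x = [xi - learning_rate * gi for xi, gi in zip(x, g)]
    return x


# Illustrative example (assumed): f(x, y) = (x - 3)^2 + 2(y + 1)^2, minimized at (3, -1).
def f(p: Vector) -> float:
    x, y = p
    return (x - 3) ** 2 + 2 * (y + 1) ** 2


def grad_f(p: Vector) -> Vector:
    x, y = p
    return [2 * (x - 3), 4 * (y + 1)]


x_min = gradient_descent(grad_f, [0.0, 0.0])
print(x_min)  # close to [3.0, -1.0]
```

With a fixed step size, the iteration converges only if `learning_rate` is small enough relative to the curvature of f. For this example any value between 0 and 0.5 works, because the error in y is multiplied by (1 − 4η) at each step. On a non-convex function, the same loop stops at whichever stationary point it reaches first, which is why gradient descent is described as finding local minima.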

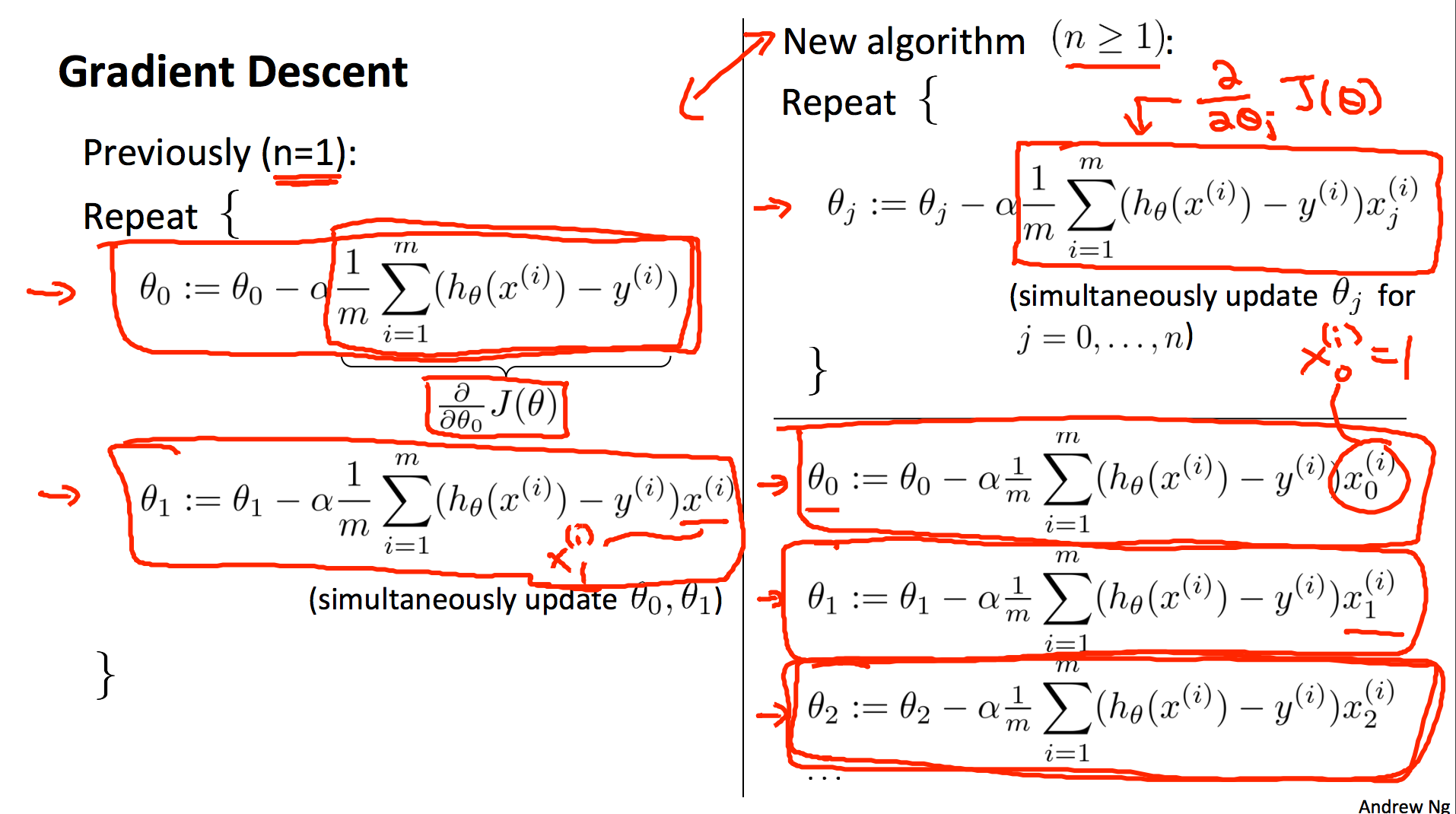

[L2] Linear Regression (Multivariate). Cost Function. Hypothesis. Gradient

Gradient Descent algorithm. How to find the minimum of a function…, by Raghunath D

Gradient Descent For Multivariate Linear Regression - Stack Overflow

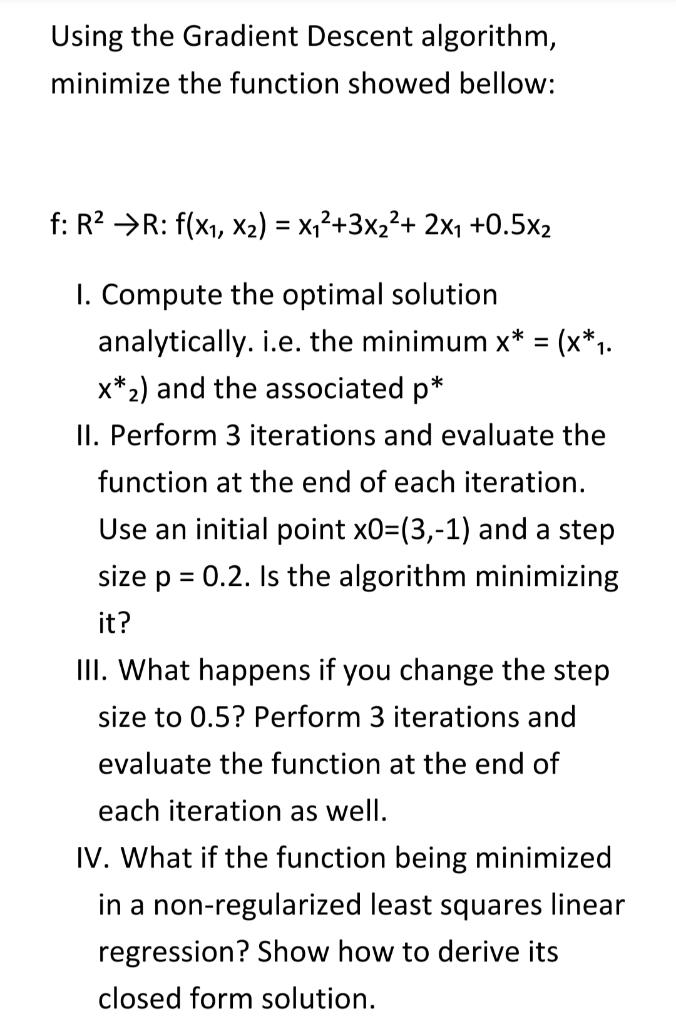

Solved Using the Gradient Descent algorithm, minimize the

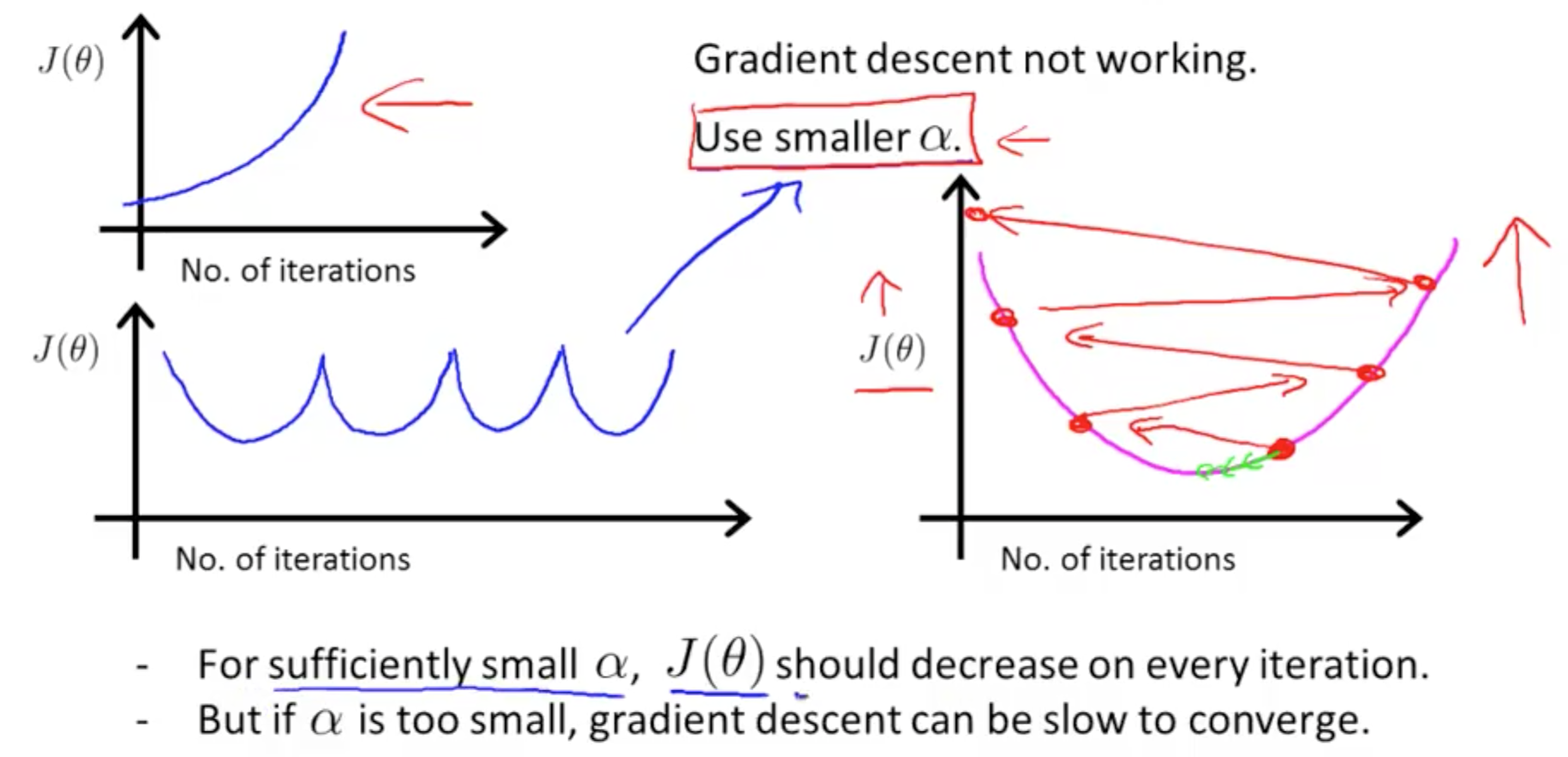

All About Gradient Descent. Gradient descent is an optimization…, by Md Nazrul Islam

Linear Regression with Multiple Variables Machine Learning, Deep Learning, and Computer Vision

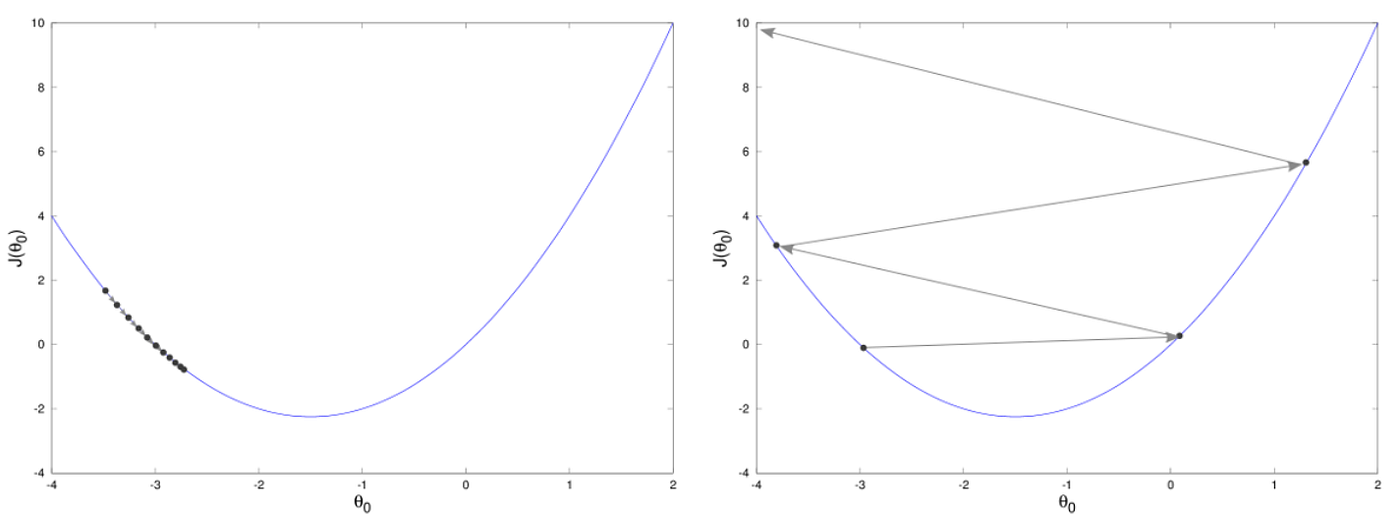

Gradient Descent Algorithm

Optimization Techniques used in Classical Machine Learning ft: Gradient Descent, by Manoj Hegde

Gradient Descent Algorithm in Machine Learning - GeeksforGeeks

Gradient Descent Algorithm in Machine Learning - Analytics Vidhya

How to figure out which direction to go along the gradient in order to reach local minima in gradient descent algorithm - Quora

Recommended for you

-

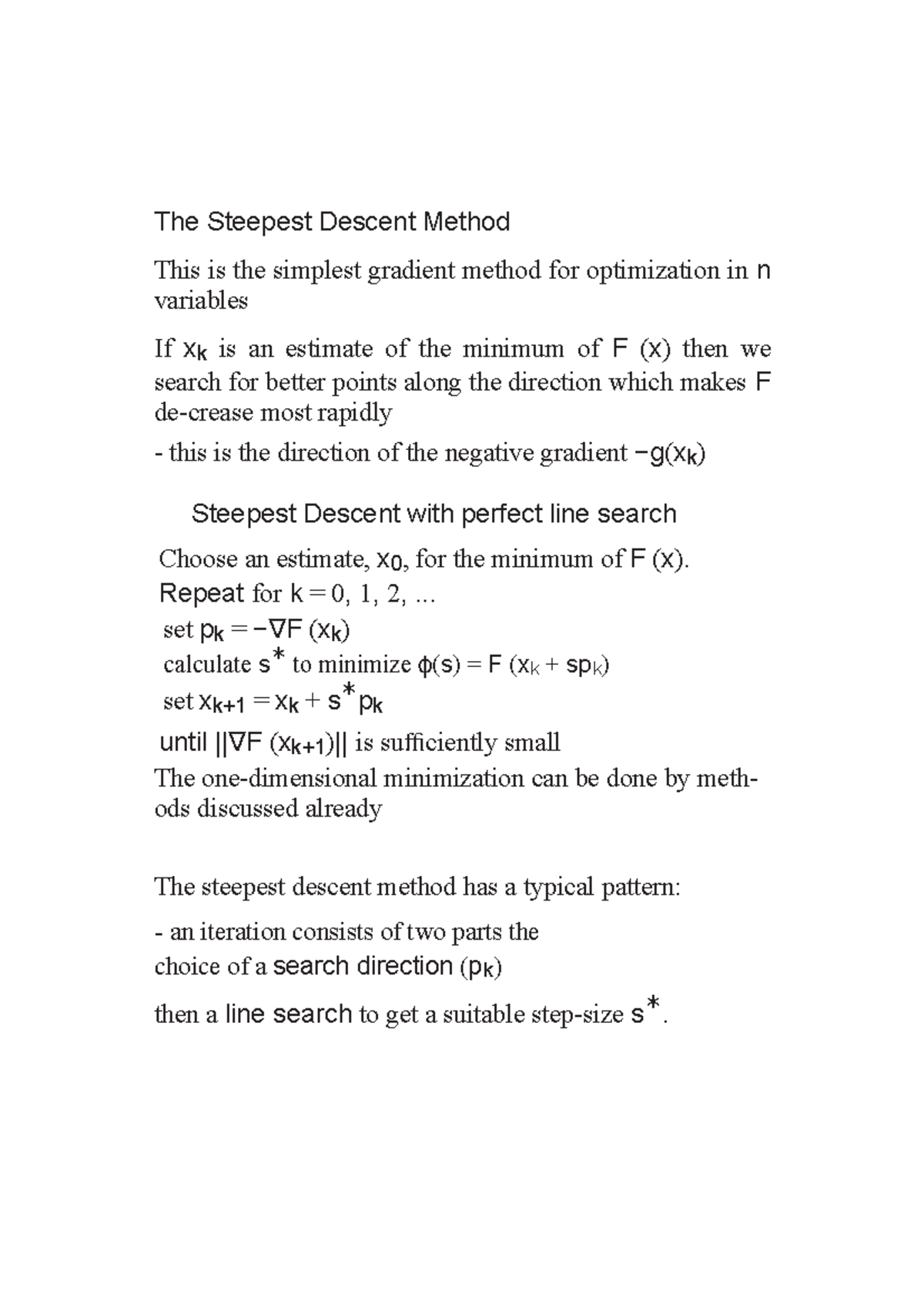

Method of steepest descent - Wikipedia31 março 2025

-

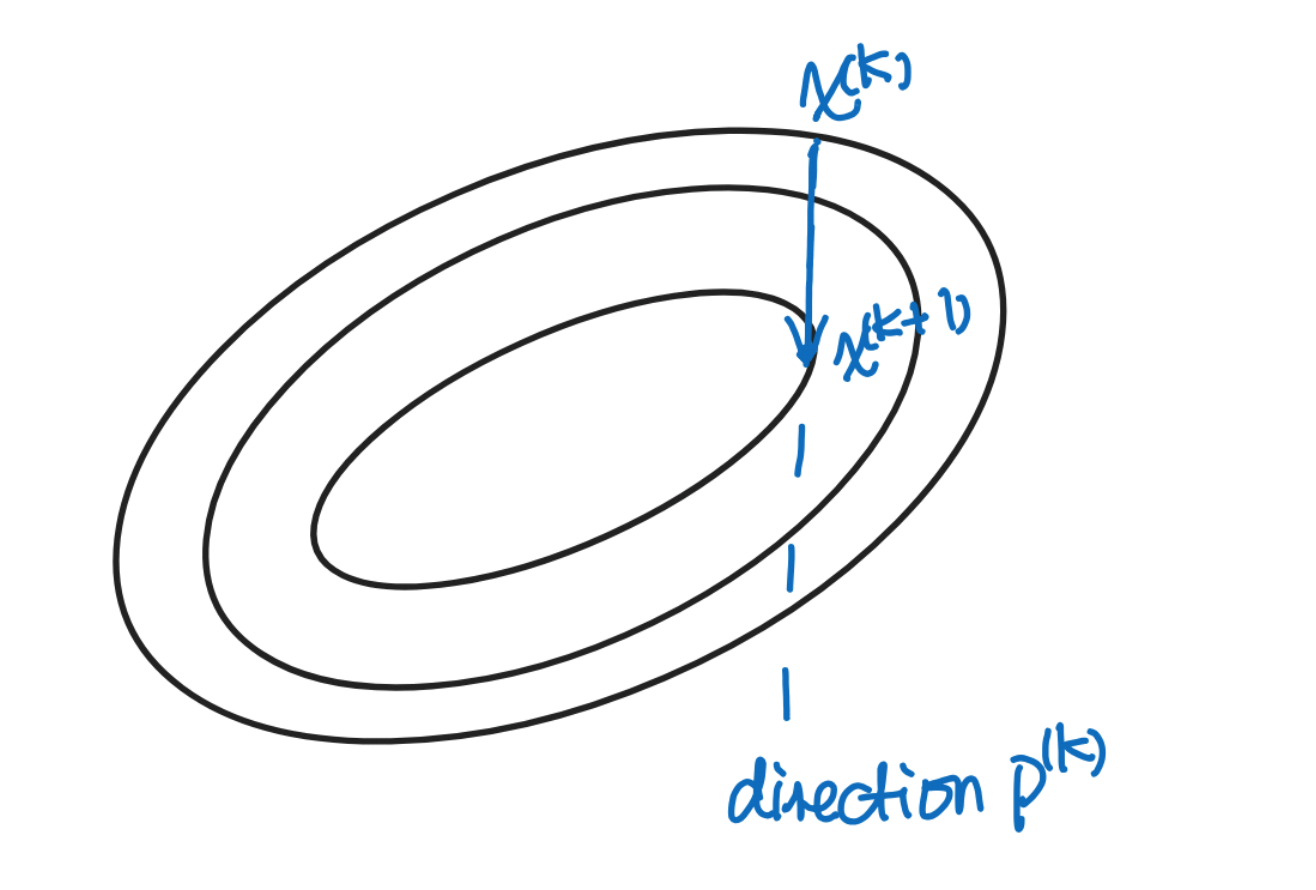

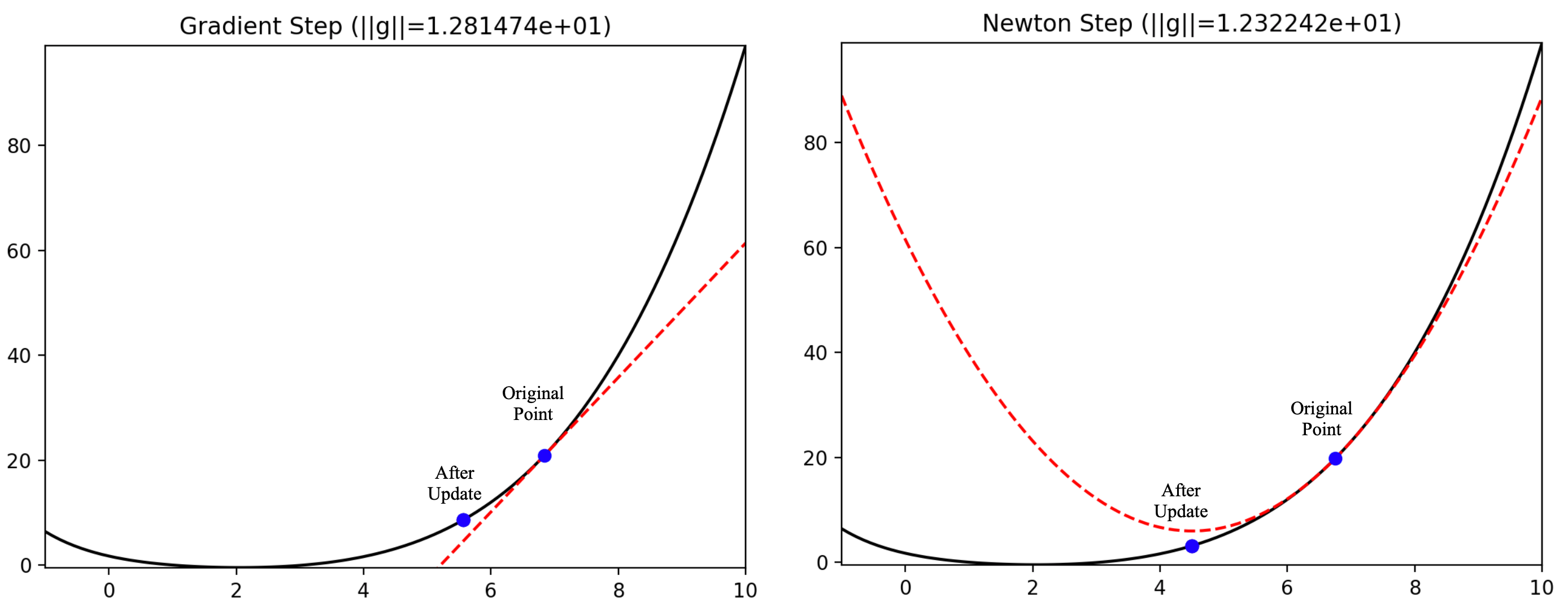

Descent method — Steepest descent and conjugate gradient, by Sophia Yang, Ph.D.31 março 2025

Descent method — Steepest descent and conjugate gradient, by Sophia Yang, Ph.D.31 março 2025 -

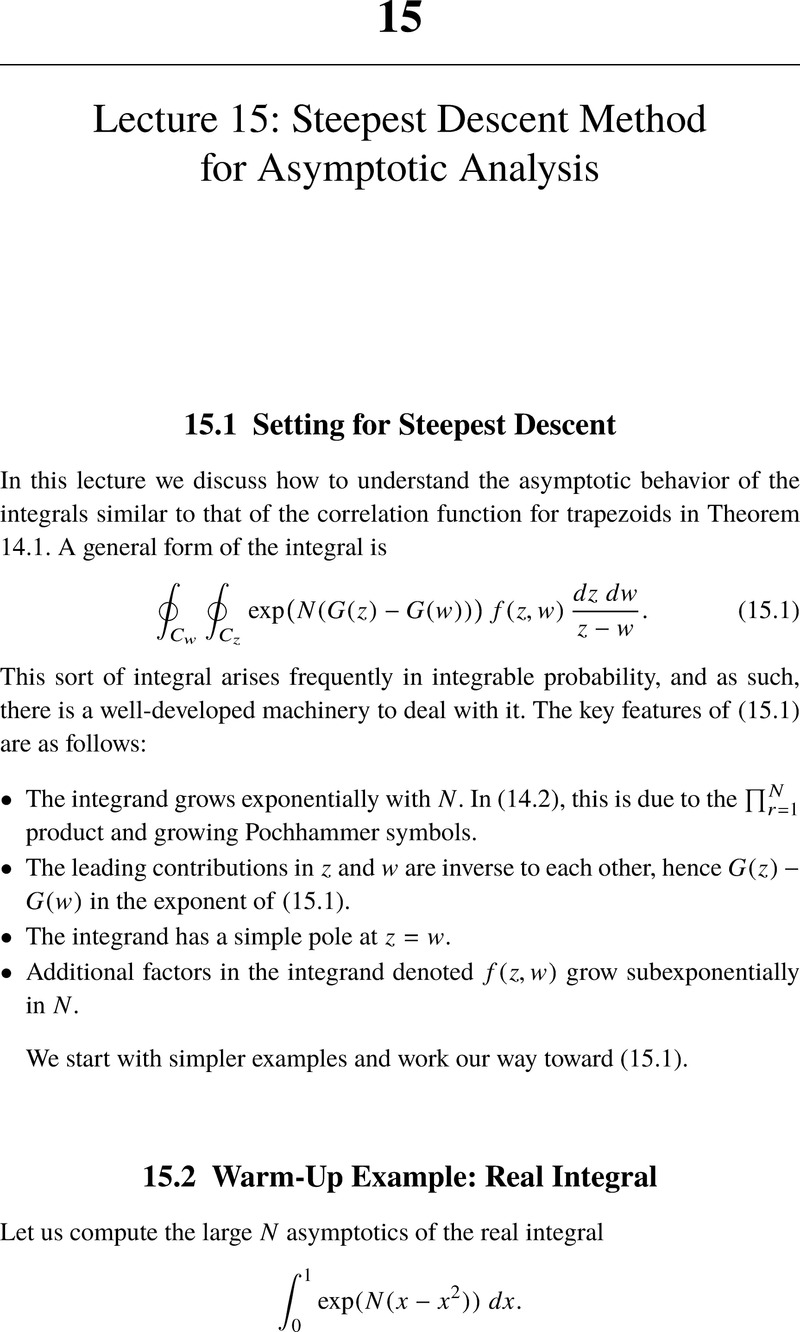

Lecture 15: Steepest Descent Method for Asymptotic Analysis (Chapter 15) - Lectures on Random Lozenge Tilings31 março 2025

Lecture 15: Steepest Descent Method for Asymptotic Analysis (Chapter 15) - Lectures on Random Lozenge Tilings31 março 2025 -

Guide to gradient descent algorithms31 março 2025

Guide to gradient descent algorithms31 março 2025 -

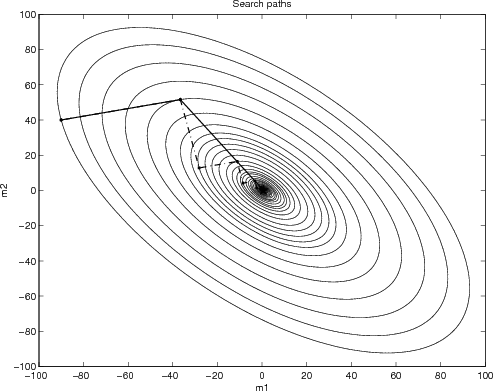

Why steepest descent is so slow31 março 2025

Why steepest descent is so slow31 março 2025 -

Lecture 7: Gradient Descent (and Beyond)31 março 2025

Lecture 7: Gradient Descent (and Beyond)31 março 2025 -

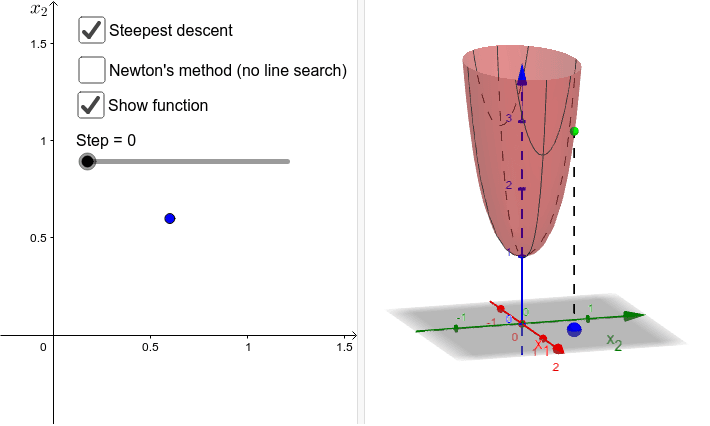

Steepest descent vs gradient method – GeoGebra31 março 2025

Steepest descent vs gradient method – GeoGebra31 março 2025 -

Steepest Descent - an overview31 março 2025

Steepest Descent - an overview31 março 2025 -

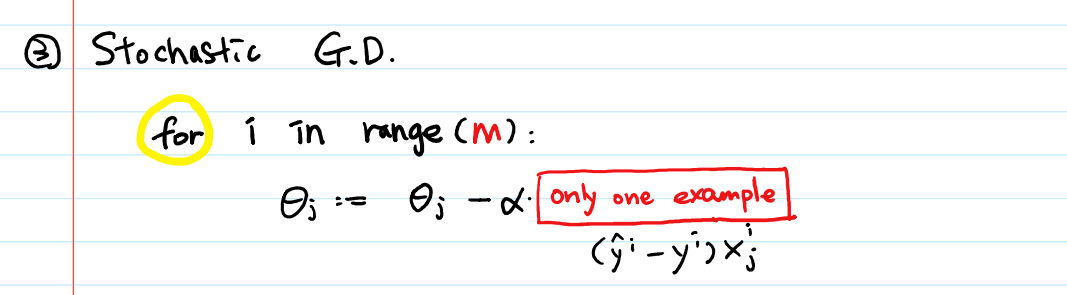

3 Types of Gradient Descent Algorithms for Small & Large Data Sets31 março 2025

3 Types of Gradient Descent Algorithms for Small & Large Data Sets31 março 2025 -

The Steepest Descent Method - Summary - The Steepest Descent Method This is the simplest gradient - Studocu31 março 2025

The Steepest Descent Method - Summary - The Steepest Descent Method This is the simplest gradient - Studocu31 março 2025

você pode gostar

-

FIFA 23 será lançado em 30 de setembro para PS5, PS4, Xbox Series, Xbox One, PC e Stadia - GameBlast31 março 2025

FIFA 23 será lançado em 30 de setembro para PS5, PS4, Xbox Series, Xbox One, PC e Stadia - GameBlast31 março 2025 -

![Sly 2 New Game Plus [Sly 2: Band of Thieves] [Mods]](https://images.gamebanana.com/img/ss/mods/61dcd48b491a3.jpg) Sly 2 New Game Plus [Sly 2: Band of Thieves] [Mods]31 março 2025

Sly 2 New Game Plus [Sly 2: Band of Thieves] [Mods]31 março 2025 -

bear PNG transparent image download, size: 620x752px31 março 2025

bear PNG transparent image download, size: 620x752px31 março 2025 -

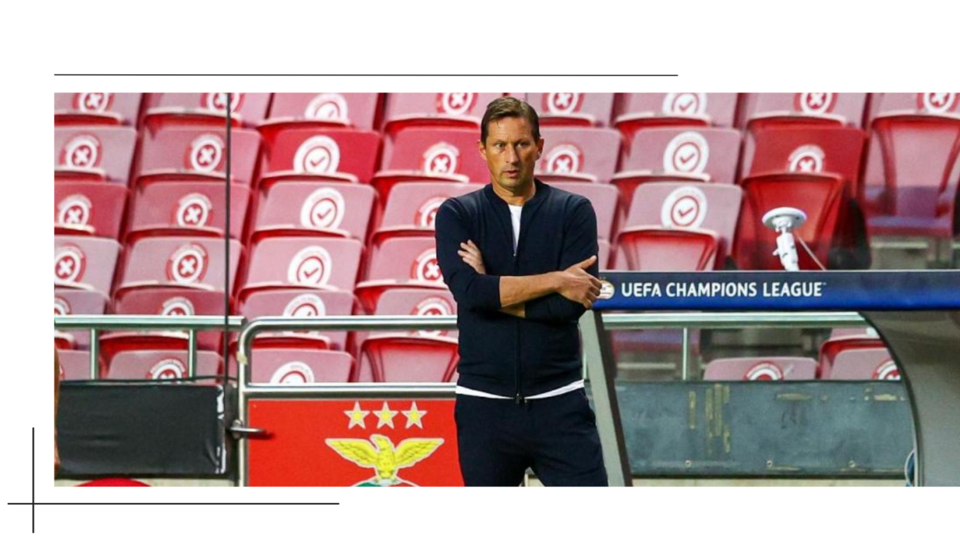

Roger Schmidt no Benfica - Visão do Peão31 março 2025

Roger Schmidt no Benfica - Visão do Peão31 março 2025 -

Dragon Ball Z (English Dub) Power of the Spirit - Watch on Crunchyroll31 março 2025

-

Street Fighter II Victory31 março 2025

-

Song Lyrics to, “Michael Booth” - Booth Brothers31 março 2025

Song Lyrics to, “Michael Booth” - Booth Brothers31 março 2025 -

Will Champion, dos Coldplay, desenhou um pastel de nata na bateria: “É maravilhoso estar de volta a Portugal” - Expresso31 março 2025

-

AOK Coach Hire Carrickmacross31 março 2025

-

Fire Png Gif - IceGif31 março 2025

Fire Png Gif - IceGif31 março 2025